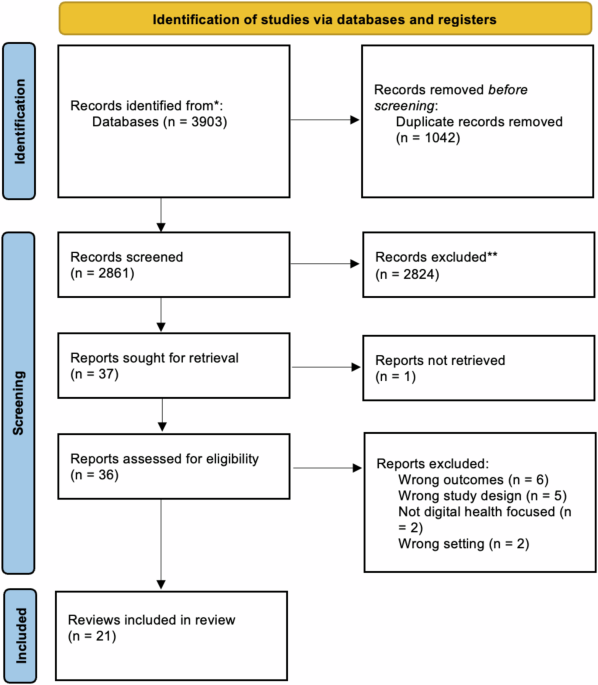

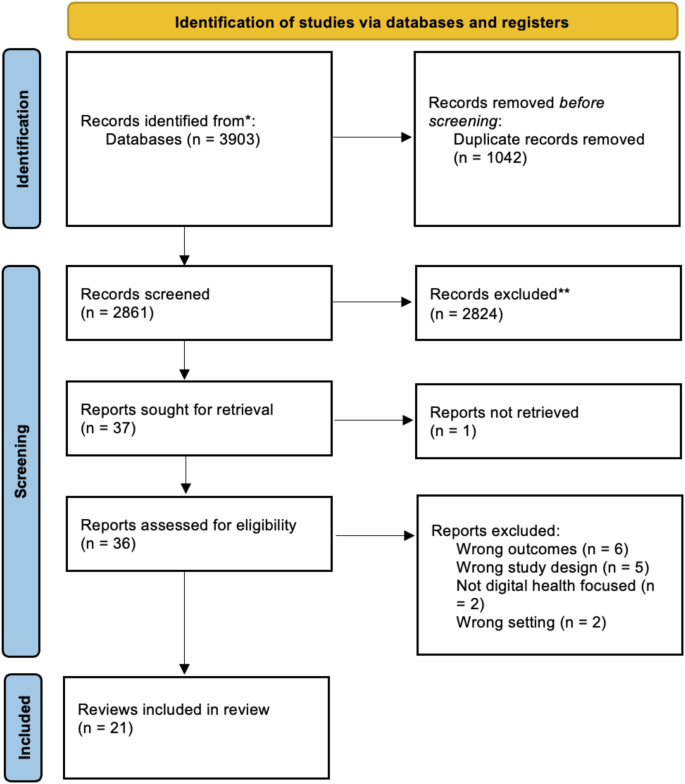

Our search identified 3903 titles and abstracts. After excluding duplicates, we screened 2861 for inclusion and then 37 full-text articles. Twenty-one were included in the final analysis (Fig. 1). Reasons for exclusion included outcomes not matching inclusion criteria (n = 6), wrong study design (n = 5), not digital health focused (n = 2) and wrong setting (n = 2).

This flowchart, adapted from the PRISMA 2020 Flow Diagram, shows the number of records identified from the search (2861 non-duplicative records), the number of records excluded based on title and abstract (2824), the number of studies excluded based on the full article review (15), and the reasons for exclusion. Twenty-one reviews were included in this analysis.

Study characteristics

Table 1 presents the characteristics of included reviews. Reviews were published between 2015 and 2023, most often in Australia (n = 4)15,16,17,18, the United Kingdom (n = 2)19,20, Canada (n = 2)21,22, the United States (n = 2)23,24, the Netherlands (n = 2)25,26 and Denmark (n = 2)27,28. As shown in Fig. 2, papers were recent, most often published in 2022 (n = 6)19,23,25,29,30,31, 2021 (n = 3)17,32,33, 2020 (n = 3)26,27,20 and 2019 (n = 3)18,21,24. Most reviews identified as systematic reviews (n = 11)16,17,19,23,24,26,30,31,32,34,35 or scoping reviews (n = 6)18,21,25,27,29,28. Other review types included literature reviews (n = 2)15,32, rapid review (n = 1)22 and practitioner review (n = 1)20. The number of studies identified per review ranged from 9 studies35 to 433 studies19. Authors referred to interventions in their reviews as digital health (n = 6)19,21,28,31,32,20, mobile health (n = 6)17,18,22,30,31,35 or electronic health (n = 3)23,25,26. Other classifications included serious digital gaming interventions (n = 1)34, information communication technologies (n = 1)24, assistive technology (n = 1)27, technology-based interventions (n = 1)16, health-related technology (n = 1)33 and a combination of digital and mobile health (n = 1)31.

This bar graph shows the number of studies (y-axis) published per year (x-axis). The cumulative number of reviews is also depicted by the line through the bar graph.

Most commonly, reviews focused on co-design of digital interventions targeted towards individuals with chronic conditions (n = 8)18,29,21,27,28,30,32,20 or towards a combination of individuals with chronic conditions and health promotion in healthy individuals (n = 8)15,16,17,23,24,26,33,35. Other reviews identified studies focused broadly on acute and chronic conditions and health promotion in healthy individuals (n = 1)19, on health promotion in healthy individuals (n = 1)34, or on a combination of acute and chronic conditions (n = 2)22,31. Finally, one review did not report on the specific conditions included25.

A variety of terms were used to refer to co-design, including co-design (n = 3)17,22,33, human factor approaches (n = 1)15, participatory design (n = 3)26,29,34, community-based participatory research (n = 1)35, human-centered development (n = 2)25,30, patient-centered methods for design and development (n = 1)24, and involvement of end-users in design and/or test phases (n = 1)27. The remaining reviews used multiple terms to describe co-design (n = 9)16,18,19,21,23,28,31,32,20.

Reviews most frequently (n = 17)15,16,17,18,22,23,24,25,26,27,28,30,31,32,33,20,35 aimed to summarize or synthesize the current state of co-design approaches used in their identified interventions and health conditions. In addition to synthesizing the current state of co-design activities, four reviews stated more specific goals, including the review by Henni et al.29, who aimed to investigate the needs and perceived barriers of people with impairments as they pertained to user engagement with digital health interventions29.

Co-design participants

Most reviews (n = 19) included a range of co-design participants such as patients, caregivers, healthcare professionals, policy makers, teachers, and behavior specialists. The remaining two reviews24,33 reported on studies which only involved patients in co-design processes. Ten of the 21 reviews18,29,23,26,27,28,30,32,34,35 discussed co-design session sample sizes and, when reported by included studies, these ranged from 2 participants32 to 1000 participants35.

Reviews reported on engaging patients ranging from children to older adults (n = 7)15,29,22,24,25,31,34, older adults (≥60 years) (n = 6)18,23,28,30,32,33, adolescents to adults (n = 2)17,35, children and young people (n = 2)16,20, children (≤18 years) (n = 1)21, or adults (n = 1)27, or did not provide data on participant ages (n = 2)19,26. Specific participant age ranges, or definitions of terms such as ‘children’, were often not reported. A single review presented data on study participant race and ethnicity35 and three reported on gender or sex16,29,35. The review by Eyles et al., which reported on race and ethnicity and on gender or sex, emphasized that in their identified studies information on age, gender and socioeconomic position of participants and stakeholders was generally poorly reported35.

Co-design activities

All identified reviews described the co-design activities used by included studies, with wide variation in the level of detail provided. Surveys were the most frequently used quantitative approach to enable co-design, reported in 11 reviews15,17,21,23,24,25,28,32,20,34,35. Focus groups and interviews were also frequent, reported in 17 reviews15,17,18,19,29,22,23,24,25,27,28,30,20,32,33,34,35 and 14 reviews15,17,19,29,22,23,24,25,27,28,30,20,32,34, respectively. Other qualitative approaches reported in the reviews were observation (n = 10)15,17,21,23,27,28,30,31,33,35 and think-aloud strategies (n = 6)18,19,23,31,33,20. Various creative co-design activities were also reported, including storyboarding (n = 6)16,17,26,30,20,35, persona/scenario building (n = 6)16,17,25,26,30,31, drawing (n = 2)33,20, photos/video elicitation (n = 4)18,29,33,35, storytelling (n = 2)31,20, and role-playing (n = 1)26. Where digital prototypes were included in co-design, they were most frequently 2D or paper-based models (n = 6)15,17,18,26,30,33, wireframes (n = 3)17,30,20, or web-based software (n = 2)33,20. Reported intervention evaluations included iterative usability testing (n = 8)15,17,18,29,22,25,28,30, digital health intervention-embedded engagement metrics including app-tracking (n = 2)15,20, pilot testing (n = 4)17,27,30,33, and living laboratories (n = 3)15,27,33.

The locations where co-design activities were conducted were discussed in seven reviews18,23,27,30,32,20,35. Of the reviews reporting on co-design location, one found that of the 25 studies identified, only 11 reported a specific setting. These settings were laboratories, clinics, homes, community, senior centers, and virtual; however, the specific number of studies reporting each location was not provided23. The remaining reviews provided scant detail on locations.

Reporting on co-design frequency, duration and degree of participation

The duration and frequency of co-design sessions were reported by fewer than half of the reviews, with only eight18,24,27,30,32,33,34,35 discussing session duration and seven18,27,28,32,33,34,35 reporting session frequency. Within those reviews, frequency and duration were reported in varying detail. Most reviews reported a handful of examples from the studies they identified, including a 2-hour collaborative design workshop or a half-day co-design workshop33. Reviews by Eyles et al.35 and Woods et al.18 reported that the studies they identified provided inadequate descriptions of both session duration and frequency.

Seventeen of the reviews15,16,17,19,21,22,23,24,25,26,27,28,30,31,20,33,35 attempted to distinguish the phase of the intervention (pre-design, development, evaluation or post-design) in which participants were included. The review by Cole et al.23 rated the level of co-design participation using a framework36 with the ratings “informed”, “consulted”, “involved”, “in collaboration as a co-leader” and “empowering oneself and others”. Of the 25 studies, Cole et al. state that most involved the first three levels of participation. The review by Orlowski et al.16 also attempted to classify participant involvement in co-design by categorizing studies using several different concepts drawn from participatory-based research16. Overall, they found that 70% of projects reported predominantly consultative consumer involvement16.

Twelve of the reviews15,17,18,21,24,25,26,28,30,32,20,35 provided details on the aspects of the intervention that end-users participated in co-designing, although to varying degrees. For example, the review by Bevan Jones et al. highlighted a study in which discussions with youth patient partners focused on illustrations, characters, scripts and animation for the digital health intervention20. The review by Wegener et al. provided a detailed list of specific contributions that older participants made to the development of identified interventions, including the content of applications and how sensors should be worn28.

Frameworks used to guide co-design and review conduct

Thirteen reviews16,17,18,21,22,23,24,25,26,28,30,31,35 aimed to identify frameworks or theories which underpinned intervention development, including behavioral change, intervention development and co-design frameworks. Of these thirteen reviews, all except one identified frameworks used in studies21. Thirty-two frameworks, models or theories related to co-design were described by reviews. The most used were variations of the participatory design (PD) method, user-centered design and human-centered design frameworks. Authors of reviews also used co-design frameworks or models to synthesize results, with ten of 21 reviews17,18,21,23,25,26,32,33,34 reporting such use.

Evaluation of co-design

Co-design was evaluated in terms of (1) overall effectiveness and (2) participants’ views of the process. Reviews reported that quantitative evaluation of co-design effectiveness was challenging overall, and only one review provided a meta-analysis34. This meta-analysis did not support the notion that digital games developed with participatory design improve health outcomes more than those not co-designed34. The review by Vandekerckhove et al.26 described a series of outcomes reported in their identified studies, including eHealth development (number of ideas for development), eHealth quality (usability, feasibility) and user outcome (effectiveness)26. Qualitative reports on the potential for co-design to improve digital health intervention utility were provided by three reviews19,21,24. These reviews stated that an end-user advisory group can lend valuable insights into intervention content and structure, making interventions more user-friendly and feasible to implement21,24, and that adoption of participatory approaches to the design of eHealth interventions and the use of personalized content enhance overall system effectiveness19. Five reviews16,22,26,32,20 reported on participants’ views on participating in co-design and overall reported high levels of satisfaction; however, most of these reviews emphasized that this was an infrequently assessed quality metric in identified studies.

Co-design barriers and challenges

Nine reviews16,18,19,22,25,28,30,33,20 reported on challenges to co-design of digital health interventions. Power imbalances between researchers and participants were amongst the most cited barriers to co-design conduct. Additional barriers included time and financial constraints, costs, difficulty recruiting participants (particularly those from a minority or vulnerable group), participant “groupthink” at co-design sessions, and the thoughts of medical and health professionals being privileged over those of patients. Two reviews reported barriers to specific co-design strategies25,20, which included perceived inadequacies of surveys and questionnaires in exploring complex issues as well as difficulties participants faced in freely talking to strangers in new settings, including in focus groups20. The review by Sumner et al.33 listed barriers to successful co-design of digital health interventions and proposed strategies to address them. These strategies included building relationships and trust, empowering the end-user, building end-user knowledge, and establishing value and interest33. It was suggested that lack of buy-in from researchers and participants, as well as issues with recruitment, could be addressed by conducting co-design in environments familiar to participants33.

Accessibility and equity

Eight reviews reported on accessibility and equity29,21,22,26,28,30,20,35. Bevan Jones et al. identified a study which discussed the inclusion of cultural advisors and the hosting of formal design opening and closing sessions with community elders in the Māori and Pacific Islander populations20. Strategies to recruit vulnerable populations discussed in reviews included a proactive outreach approach that combined several approach and recruitment strategies. Other reviews highlighted that not embedding equity and accessibility principles in co-design of digital health interventions risked worsening the digital divide21 and risked design failures if developer biases and stereotypes related to certain groups, such as older adults, were embedded in products30. A handful of the identified reviews focused specifically on improving co-design in vulnerable populations, including children with special health care needs and their families21, people with impairments29, people with dementia27 and older people with frailty or impairment28.

Review quality appraisal

All twenty-one reviews were classified as critically low quality. Very few reviews met the requirements for questions one (n = 2)33,34, seven (n = 2)22,26, nine (n = 1)33, 10 (n = 1)16, 11 (n = 1)34, and 13 (n = 1)30. No reviews met the requirements for questions 12 and 15. For additional details and the full questions, see Table 2.
